1. Introduction

Language documentation promotes the recording of diverse genres of speech (Woodbury 2003), including genres that cross boundaries into other scholarly disciplines (Holton 2018). An often overlooked genre is musical surrogate speech: the use of musical instruments to transmit linguistic messages. A related practice, whistled speech, is also a speech surrogate, though it has received more scholarly attention (e.g., Meyer 2015; Rialland 2005); in this paper, I address it only as it relates to musical surrogate speech. The reasons these practices are overlooked are likely varied. Perhaps they do not seem “linguistic” enough if no words are articulated by human vocal tracts or hands. Perhaps researchers are not musically inclined and feel out of their depth. Or researchers may simply be unaware of the phenomenon and never have thought to ask whether these traditions form part of the community’s linguistic repertoire.

This article is a clarion call to the language documentation community to seek out and make records of these traditions around the world. As I will detail below, musical surrogate languages offer unique insights into linguistic structure and the human language faculty, and they often fill unique communicative niches, shaped by cultural, spiritual, and physical considerations. The recognition and conceptualization of linguistic structure that is required to encode it on a musical instrument makes these traditions a form of ethnoscience: linguistic analysis from an emic perspective. They also tend to be highly endangered practices (McPherson 2018; Seifart et al. 2018; Sicoli 2016; Struthers-Young 2022), even if the spoken language itself is stable (Eze & McPherson 2025). This makes it even more important to create records of them now. The creation of these documentary materials has enormous potential to revolutionize how we think about language and music as interrelated forms of human expression and how people develop speech technologies (and relatedly, emic theories of language) in response to a wide range of physical, cultural, and spiritual challenges. Moreover, the structure, acquisition, usage, and comprehension of surrogate languages touch on nearly every aspect of linguistic theory in unique and potentially transformative ways. Thus, I suggest that such research on musical surrogate languages and whistled speech should be considered a new subfield of linguistics: surrogate linguistics.

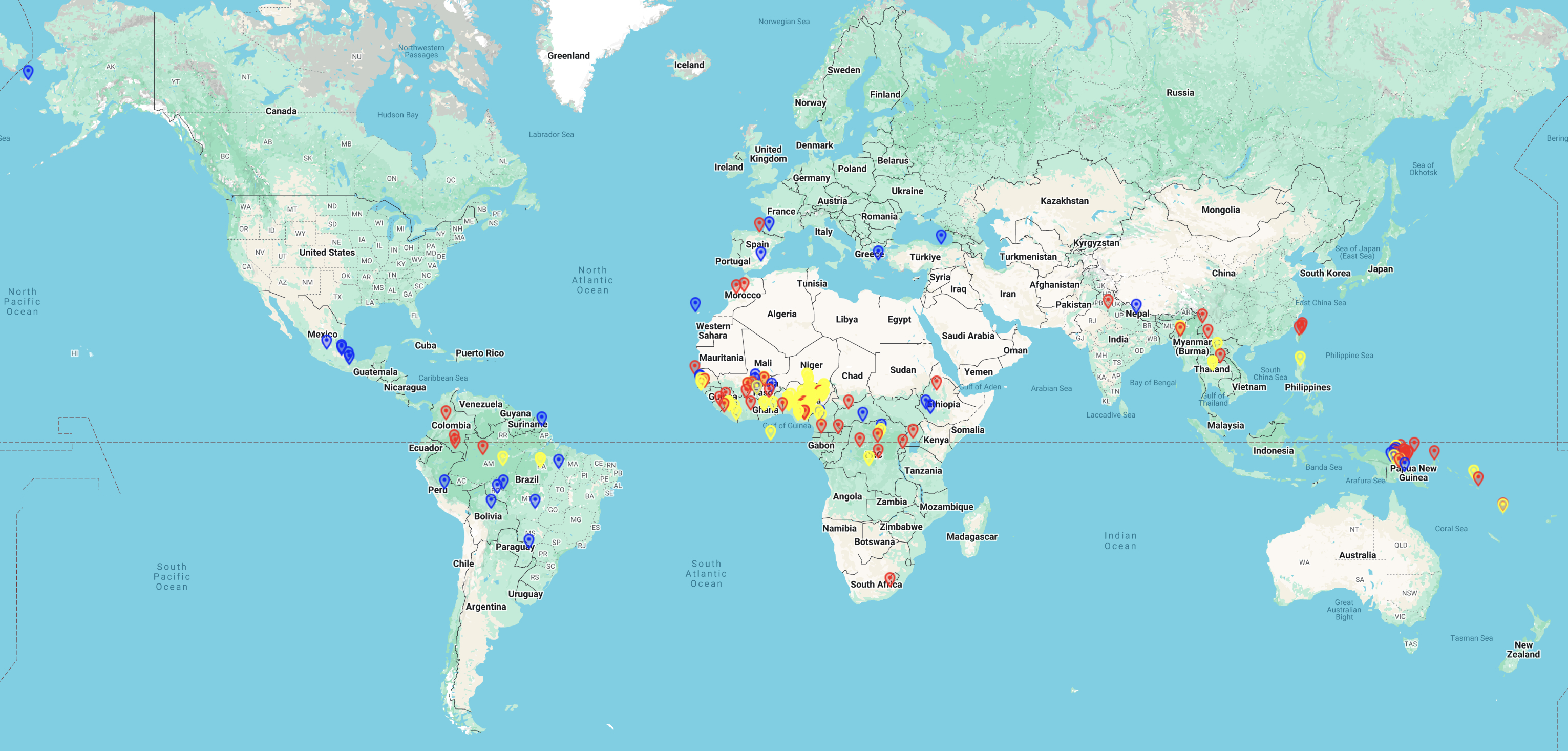

From what we know, musical surrogate languages are found all around the world on a vast array of instruments, especially but not exclusively among speakers of tonal languages (McPherson et al. 2025). It is likely that many more traditions are entirely undocumented, particularly in West/Central Africa and Papua New Guinea. Indeed, in 2025, I launched a survey of Nigerian surrogate languages in collaboration with Dr. Samuel Akinbo (University of Toronto), and virtually every language we have asked about either has active musical surrogates or is remembered to have had such systems in the past. The vast majority of the systems we are learning about are completely undocumented and figure nowhere in the published literature. My hope in writing this paper is that by approaching this as a scholarly community we will be able to catalogue and record many more musical surrogate languages around the globe before it is too late.

The remainder of the paper is structured as follows. In Section 2, I define and exemplify musical surrogate languages, including indisputable cases as well as cases at the margins. Section 3 addresses the question of why linguists should be interested in musical surrogate languages, and why we should be including them in our documentary corpora. Section 4 sketches out a brief “how-to” guide, with discussion of recording, elicitation, transcription, ethics, and archiving. Section 5 concludes.

2. What are musical surrogate languages?

Musical surrogate languages are systems in which linguistic content is encoded in the sounds of a musical instrument in order to transmit or communicate a message. The term “linguistic content” is chosen explicitly to encompass the sounds of language, morphological or syntactic structure, and of course meaning/semantics; the definition is deliberately broad in that a system need not encode all of these levels of linguistic structure to qualify as a musical surrogate. This diverges from Nketia (1971), who treats only those systems that emulate speech sounds (see Section 2.1 below) as true speech surrogates. As James (2021) points out, the line between a truly phonemic system and one based on arbitrary musical signs is often blurry at best, and many systems combine elements of the two. Hence, I will cast a broad net, which is arguably even more important when documenting systems whose structural underpinnings have yet to be explored and analyzed.

The definition I offer here aligns with one of two categories that Niles (2010) proposes for speech surrogate systems more broadly: what he calls “instrumental systems” (Niles 2010: 2). The other category he proposes is “somatic systems”, which use only the body itself and would include whistled speech but also singing and chanting. One could debate whether singing and chanting should be considered surrogates or simply a different mode of language use, with divergences in phonological, syntactic, and lexical structure from everyday speech. Indeed, going further, this debate could be extended to whistled speech too, especially for non-tonal languages where the oral articulators are in largely the same position that they would be for speech but the airstream mechanism differs (see e.g. Busnel & Classe 1976; Cowan 1972). However, as Meyer (2015) notes, whistling is “not easily identified as a speech act by untrained and unaware speakers” (3) and “requires more training than… other speech registers” (2). It thus fits more squarely with a definition of speech surrogates as being the transfer of linguistic form to what is traditionally not a linguistic modality (i.e., not [only] the vocal tract and/or hands).

Meyer & Manfredi (2025) use the term “non-voiced auxiliary speech” to combine whistled speech with only those instrumental traditions that emulate phonemic aspects of speech, thus excluding instrumental surrogates grounded in arbitrary relationships between sound and meaning (i.e. the “lexical ideograph” systems discussed in Section 2.2 below, which are common in Oceania, the part of the world on which Niles 2010 focuses). Sebeok & Umiker-Sebeok (1976) likewise combine whistled speech and instrumental speech in their seminal volume Speech surrogates: Drum and whistle systems, but they do not exclude lexical ideograph systems. It is clear that whistling and musical surrogate systems have many elements in common, and indeed, some whistled traditions can be carried out either somatically (with the hands and mouth) or with the aid of an external tool (e.g., whistled Spanish of the Canary Islands vs. that of Andalusia, where a small wood or clay whistle known as a pito is used, Meyer 2015). Their definition thus draws the line in a different place than Niles (2010), excluding some somatic systems like chanting and singing while including whistled speech.

Slicing the domain yet another way, one could propose a class of “musical speech registers” that would include instrumental traditions as well as singing, which have in common their perception as music, to the exclusion of whistled speech, which is generally not intended to be musical. A final distinction could be between systems that reproduce a linguistic contrast in a different way from how it is regularly articulated vs. those that do not. In the domain of whistled languages, this would distinguish non-tonal whistling from tonal whistling, since in the latter, fundamental frequency (f0) contrasts normally produced by the larynx are instead transferred to tongue position (what Meyer & Manfredi 2025 call “articulatory transfer”). A similar distinction could be made for musical instruments like the jaw harp that use the oral cavity as a resonator. These instruments are likewise used for speech surrogacy in both non-tonal and tonal languages, but the mechanism for reproducing speech sounds differs. For instance, in a non-tonal language like Altai (Levin & Süzükei 2006), the jaw harp acts simply as the external sound source for otherwise regularly articulated speech (i.e., the musician mouths the words into the jaw harp), whereas in Hmong, the backbone of the jaw harp’s speech surrogacy is the language’s tone system reproduced not by the larynx but through oral harmonics, with a secondary consonantal contrast reproduced in the plucking pattern of the instrument. In other words, the articulation of Hmong surrogate speech on the jaw harp differs radically from regular spoken language, which is not the case for Altai.

In any case, it is clear that there exist many interrelated modes of language use that fall outside the bounds of regular day-to-day speech.1 In what follows I will focus only on musical surrogate languages that use an instrument to create new pathways for expressing linguistic form and meaning, and especially those in which speech is not merely mediated with an instrument as an artificial larynx or vocoder. (Systems at the margins of this definition are discussed in Section 2.3.)

A final definitional note concerns my use of the term “speech” in the discussions that follow. I use this to refer to all genres and registers of spoken language, as opposed to signed language. This includes poetry, conversational speech, announcements, chants, etc. The relationship between speech genre and musical surrogate languages is discussed in Section 3.5.

While the best-known musical surrogate languages may be “talking drums”, this phrase can refer to numerous kinds of percussion instruments in unrelated languages and areas of the world. These include tension drums such as Yorùbá dùndún or gángan (Akinbo 2019; Durojaye et al. 2021), sets of barrel drums such as Akan atumpan (Bagemihl 1988; McPherson & Obiri-Yeboah 2024; Nketia 1963, 1971), or slit-log drums such as the Bora manguaré (Seifart et al. 2018) or the Bauro tamtam (Snyders 1976). Instruments used for speech surrogacy go far beyond (drummed) percussion. They include flutes such as Igbo oja (Carter-Ényì et al. 2021; Eze & McPherson 2025), clarinets such as Gavião totoráp (Meyer & Moore 2021), fiddles like Hmong xim xaus (Poss 2012), xylophones such as Toussian balafon (Struthers-Young 2022), jaw harps such as Huli híriyùla (Pugh-Kitingan 1977), mouth bows such as Huli gáwa (Pugh-Kitingan 1977), and horns such as Asante mmɛntia (Kaminski 2008; McPherson & Obiri-Yeboah 2024), among others. For the past several years, a team of students and I have been compiling a database of speech surrogates around the world, the Online Database of Speech Surrogates or ODSS (McPherson et al. 2025). We currently catalogue over 449 surrogate traditions (including whistled speech) based on 168 languages spoken in 48 countries, and this number continues to grow as we conduct further surveys and fieldwork.2 See Figure 1 for a screenshot of the current map view showing the distribution of speech surrogate traditions in the database.

Stern’s (1957) classic typology of speech surrogates divided them into three categories based on how they encode speech. “Abridging” systems are phonemic and iconic: the sounds of speech are emulated on the instrument; these systems are also referred to in the literature as “emulated speech” (Seifart et al. 2018). “Encoding” systems are phonemic but not iconic: the sounds of speech form the basis of the musical surrogate, but they do not need to physically resemble them. Perhaps unsurprisingly, there are very few cases in the literature offered as “encoding” rather than “abridgement”, since what would be the purpose of basing a system on sounds but in a totally arbitrary manner?3 Catlin (1982) refers to Hmong qeej surrogacy as encoding, given the polyphonic nature of the music, but as I will detail in Section 2.1, there is a spectrum of how closely a phonemic system emulates the sounds of speech. Thus, I group together both “abridging” and “encoding” systems under the heading of “phonemic” speech surrogates. Stern’s third category, “lexical ideogram” systems, stands in contrast to these: such systems pay no heed to the sounds of language but rather represent concepts directly through arbitrary patterns. I will discuss these systems in Section 2.2. Finally, Section 2.3 discusses systems that are technically included under the definition of musical surrogate languages developed here, but which have features that stretch this definition in different ways.

2.1 Phonemic systems

The most uncontroversial musical surrogate languages are those that emulate, to a varying degree, the sounds of the spoken language through the notes, rhythms, and timbres of a musical instrument.4 Whether the sounds in question are segments or tones, I refer to these systems as “phonemic”. In every known case, musical surrogate languages reduce the number of contrasts in the spoken language to a smaller set, with the most typical contrasts encoded being prosodic ones: tone and speech rhythm. I illustrate this principle with a brief description of the Sambla balafon surrogate system; for deeper analysis and discussion, see McPherson (2018).

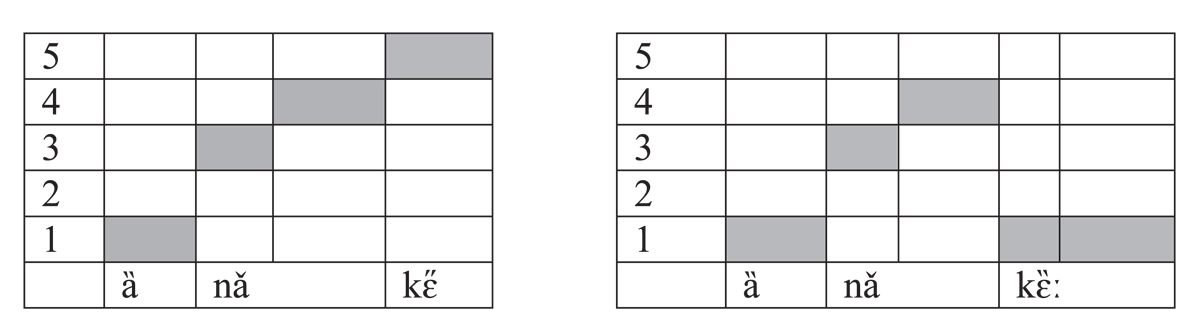

The Sambla balafon is a resonator xylophone from southwestern Burkina Faso, used to encode the community’s language, Seenku (McPherson 2020). Seenku is a Mande language with a robust segmental inventory and a complex tone system, but balanced by a largely mono- and sesquisyllabic vocabulary (Matisoff 1990). In other words, Seenku words are short, but they are packed full of phonemic contrasts. However, the balafon eschews all vowel and consonant contrasts, encoding only tone and a binary syllable structure contrast: simplex (CV) and complex (CVV, CVː, CəCV, CəCVV, CəCVː), with CVN syllables varying in their treatment on the balafon between simplex and complex. Simplex syllables (with a level tone) receive one note strike, corresponding to the tone, while complex syllables (and any contour tone, including those on simplex syllables) receive a flam stroke. (In percussive terminology, this is two note strikes in close succession; in melodic traditions, this might be considered a grace note.) Example (1) contrasts the single strike used for a short CV syllable kɛ̋ (1a) with the flam used for a CVː syllable kɛ̏ː (1b).5 A transcription of these examples is shown in Figure 2, with the strike corresponding to kɛ̋ in the left-hand figure (1a) and the flam strikes corresponding to kɛ̏ː in the right-hand figure (1b). A difference in tone encoding is also audible here, which will be discussed below.

(1) a. ȁ    nǎ     kɛ̋
       3sg  prosp  go
       ‘s/he will go’
    b. ȁ    nǎ     kɛ̏ː
       3sg  prosp  dry.up
       ‘it will dry up’

Mamadou Diabate plays ‘she will go’ on the Sambla balafon.

Mamadou Diabate plays ‘it will dry’ on the Sambla balafon.

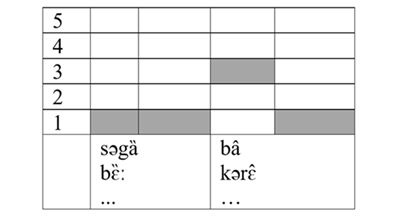

The neutralization created by this binary syllable shape encoding principle can be seen in example (2), where the same musical surrogate encoding could represent many different words, depending upon the context. Two possibilities are given here, with their balafon transcription in Figure 3.

(2) a. səgȁ   bâ
       sheep  male
       ‘ram’
    b. bɛ̏ː    kərɛ̂
       pig    man
       ‘boar’

Mamadou Diabate plays ‘boar/ram’ on the Sambla balafon.

The flam on the same note could indicate either a sesquisyllabic word like səgȁ ‘sheep’ or a long vowel like bɛ̏ː ‘pig’. The flam on different notes indicates a contour tone, but this contour could be on either a simplex syllable like bâ ‘male’ or a complex syllable like kərɛ̂ ‘man’ (or on a long vowel, not shown here).

Turning to tone encoding, Seenku’s tone system boasts four phonemic levels (Extra-low, Low, High, and Super-high), which can combine to create numerous contour tones. These tones are represented by notes in the balafon’s pentatonic scale, with the tone-note mapping dependent upon musical mode (McPherson 2018). The verb in (1a) is an S(uper-high)-toned syllable and thus played on the highest note of the scale, while the verb in (1b) is an X(tra-low)-toned syllable and thus played on the lowest note of the scale; this X tone can also be seen on the 3sg pronoun ȁ and in the head nouns in (2). Contour tones are played as a flam, with the note for each component tone struck in turn. The auxiliary verb nǎ in (1) has an LS rising tone, and the notes corresponding to L and S are struck in close succession, while both bâ and kərɛ̂ in (2) show an HX falling tone. The final X is audibly played on the same note as the initial X tone of səgȁ and bɛ̏ː.
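To make the two encoding principles concrete, here is a minimal Python sketch that applies them to hand-annotated syllables; it is an illustration, not a tool from McPherson (2018). It assumes that each syllable is supplied as a CV template plus its tone(s), that the labels X, L, H, S stand in for actual balafon keys (the real tone-note mapping depends on musical mode), that CVN syllables are treated as simplex unless the caller says otherwise, and that contours of more than two tones are out of scope.

```python
from typing import List, Sequence, Tuple

# Syllable templates the text classes as "complex"; plain CV is "simplex".
COMPLEX_TEMPLATES = {"CVV", "CVː", "CəCV", "CəCVV", "CəCVː"}
SIMPLEX_TEMPLATES = {"CV"}
# Seenku tone levels: Extra-low, Low, High, Super-high.
TONES = {"X", "L", "H", "S"}

# A stroke is a tuple of tone labels, one per strike:
# length 1 = single strike, length 2 = flam.
Stroke = Tuple[str, ...]


def encode_syllable(template: str, tones: Sequence[str],
                    cvn_as_complex: bool = False) -> Stroke:
    """Encode one syllable using only its shape and tone(s)."""
    if not tones or any(t not in TONES for t in tones):
        raise ValueError(f"expected Seenku tone labels, got {tones!r}")
    if template == "CVN":
        # CVN varies between simplex and complex treatment; leave it to the caller.
        is_complex = cvn_as_complex
    elif template in COMPLEX_TEMPLATES:
        is_complex = True
    elif template in SIMPLEX_TEMPLATES:
        is_complex = False
    else:
        raise ValueError(f"unsupported syllable template {template!r}")

    if len(tones) == 2:
        # Any contour tone is a flam across the notes of its component tones,
        # whatever the syllable shape.
        return (tones[0], tones[1])
    if len(tones) > 2:
        raise ValueError("this sketch only handles level tones and two-tone contours")
    level = tones[0]
    # Level tone: flam on one note for complex syllables, single strike for simplex.
    return (level, level) if is_complex else (level,)


def encode_phrase(syllables: Sequence[Tuple[str, Sequence[str]]]) -> List[Stroke]:
    return [encode_syllable(template, tones) for template, tones in syllables]


# Examples (1) and (2), annotated by hand.
EX_1A = [("CV", ["X"]), ("CV", ["L", "S"]), ("CV", ["S"])]   # ȁ nǎ kɛ̋
EX_1B = [("CV", ["X"]), ("CV", ["L", "S"]), ("CVː", ["X"])]  # ȁ nǎ kɛ̏ː
EX_2A = [("CəCV", ["X"]), ("CV", ["H", "X"])]                # səgȁ bâ
EX_2B = [("CVː", ["X"]), ("CəCV", ["H", "X"])]               # bɛ̏ː kərɛ̂

print(encode_phrase(EX_2A) == encode_phrase(EX_2B))  # True: 'ram' and 'boar' merge
```

The last line reproduces the neutralization in (2): a sesquisyllabic noun and a long-vowel noun both yield a same-note flam, and a contour on a simplex or a complex syllable both yield a two-note flam.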

Through this combination of speech rhythm (dictated by syllable shape) and tone encoding, the Sambla balafon player is able to say any Seenku utterance on the instrument. Video 1 illustrates fluent Sambla balafon speech with subtitles.

Sadama Diabate and Sabwee Diabate demonstrate speech surrogacy on the Sambla balafon. This video was recorded by the author in 2017 in the village of Toronsso, Burkina Faso.

While the Sambla balafon neutralizes all segmental contrasts, this is not always true for musical surrogate languages. Two Yorùbá drumming traditions, the dùndún tension drum (Akinbo 2019; Durojaye et al. 2021) and the bàtá drum ensemble (González & Oludare 2021; Villepastour 2010), are also based primarily upon tone and speech rhythm. But in addition, they encode a binary vowel height contrast known emically as “soft” vs. “intense”, which corresponds to the linguistic distinction between high and non-high. This emic distinction appears to be grounded in intrinsic amplitude, as high vowels have a lower intrinsic amplitude than non-high vowels (Lehiste & Peterson 1959).6 On the dùndún, this binary vowel distinction is encoded as a difference in amplitude, with a softer stroke for soft/high vowels and a louder stroke for intense/non-high vowels. Among mid vowels, Villepastour (2010) notes that there is some variation in whether they are treated as soft or intense. On the bàtá drum ensemble, this difference is encoded more categorically, with intense vowels involving the addition of a sharp strike on a double-sided barrel drum’s second membrane with a leather strap. This likewise iconically increases the amplitude of the strike combination.
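The soft/intense split amounts to a small lookup. The Python sketch below is illustrative only: it assumes vowels are given as base Yorùbá letters without tone marks, represents the dùndún outcome as a "soft" or "loud" stroke dynamic and the bàtá outcome as the presence or absence of the added strap strike, and exposes a hypothetical `mid_as_intense` switch because mid vowels vary in their treatment.

```python
import unicodedata


def _nfc(chars):
    # Normalize so that precomposed and combining spellings of ẹ/ọ compare equal.
    return {unicodedata.normalize("NFC", c) for c in chars}


HIGH_VOWELS = _nfc(["i", "u"])
MID_VOWELS = _nfc(["e", "ẹ", "o", "ọ"])
LOW_VOWELS = _nfc(["a"])


def vowel_class(vowel: str, mid_as_intense: bool = True) -> str:
    """Return the emic class of a Yorùbá vowel: 'soft' (high) or 'intense' (non-high)."""
    v = unicodedata.normalize("NFC", vowel)
    if v in HIGH_VOWELS:
        return "soft"
    if v in LOW_VOWELS:
        return "intense"
    if v in MID_VOWELS:
        # Mid vowels vary in their treatment; the caller makes the choice explicit.
        return "intense" if mid_as_intense else "soft"
    raise ValueError(f"not a Yorùbá oral vowel: {vowel!r}")


def dundun_stroke(vowel: str, tone: str, mid_as_intense: bool = True) -> dict:
    # Dùndún: the vowel class surfaces as stroke amplitude.
    soft = vowel_class(vowel, mid_as_intense) == "soft"
    return {"tone": tone, "dynamic": "soft" if soft else "loud"}


def bata_stroke(vowel: str, tone: str, mid_as_intense: bool = True) -> dict:
    # Bàtá: intense vowels add a strap strike on the drum's second membrane.
    intense = vowel_class(vowel, mid_as_intense) == "intense"
    return {"tone": tone, "strap_strike": intense}
```

The same vowel classification drives both functions; only the physical realization differs, gradient amplitude on the dùndún versus an added categorical strike on the bàtá.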

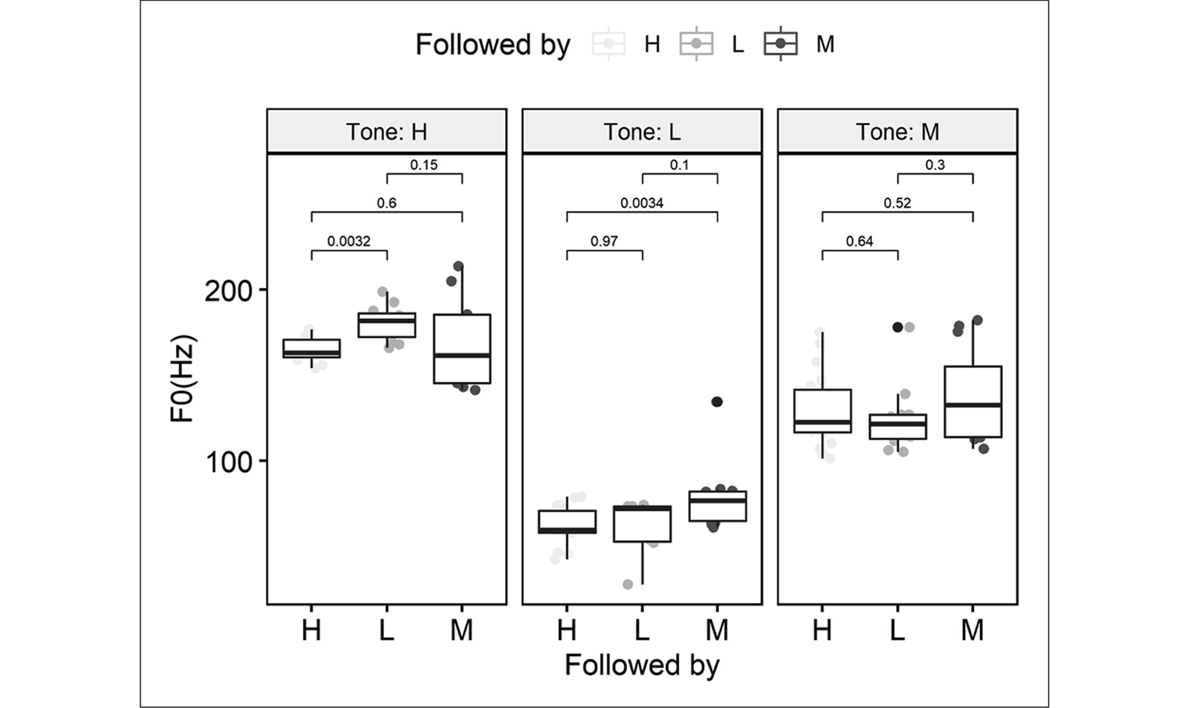

These two Yorùbá traditions demonstrate two further ways in which a musical instrument can encode tone. As we saw above, the Sambla balafon has a fixed musical scale, and tones map onto these fixed notes. The dùndún, on the other hand, is a tension drum, meaning that it can produce gradient pitch distinctions depending upon the tension applied by the player’s arm to the cords that attach the membranes to the body of the drum. Akinbo (2021) demonstrates that this continuous pitch mechanism is able to encode phonetic detail of Yorùbá tone, including raising of H tone before L and lowering of L before H, in addition to closely emulating contour tones created by tonal carryover in H-L and L-H tone sequences. These results can be seen in Figure 4.
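One way to picture the phonetic detail Akinbo reports is as context-sensitive pitch targets. The Python sketch below is a toy model, not Akinbo's analysis: the base targets (in semitones relative to M) and the 0.5-semitone adjustment are invented placeholder values, and tonal carryover into contours is not modeled.

```python
from typing import Dict, List, Optional, Sequence

# Hypothetical base targets in semitones relative to M; not measured values.
BASE_TARGETS: Dict[str, float] = {"L": -2.0, "M": 0.0, "H": 2.0}
# Hypothetical size of the contextual raising/lowering, in semitones.
ADJUSTMENT = 0.5


def pitch_targets(tones: Sequence[str],
                  base: Dict[str, float] = BASE_TARGETS,
                  adjustment: float = ADJUSTMENT) -> List[float]:
    """Assign a pitch target to each tone, raising H before L and lowering L before H."""
    targets = []
    for i, tone in enumerate(tones):
        value = base[tone]
        following: Optional[str] = tones[i + 1] if i + 1 < len(tones) else None
        if tone == "H" and following == "L":
            value += adjustment  # H is raised before L
        elif tone == "L" and following == "H":
            value -= adjustment  # L is lowered before H
        targets.append(value)
    return targets


print(pitch_targets(["H", "L", "H", "M"]))  # [2.5, -2.5, 2.0, 0.0]
```

A tension drum can realize such continuous, context-dependent targets directly, whereas a fixed-scale instrument like the balafon can only map each tone to one of its fixed notes.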

In contrast, the bàtá drum ensemble is not a pitched instrument in a strict sense. Rather, the drums produce a range of timbres depending upon how they are struck. The three tones of Yorùbá are mapped to different timbral combinations, though it should be noted that the timbre used for L tone (an open resonating strike) has a lower spectral center, i.e., a lower overall frequency, than that used for H tone (a sharp, high frequency slap mute).

The different sonic nature of these two musical surrogate systems encoding the same Yorùbá material can be heard in the audio corresponding to example (3), included with permission from Amanda Villepastour (text from Villepastour 2010: 152).

(3) Èṣù Láaróyè
    ‘Èṣù Láaróyè (praise name)’
    Èṣù tí bẹ́lẹ́kún sunkún
    ‘Èṣù who weeps with the bereaved’
    Ẹlẹ́kún ń sunkún
    ‘While the bereaved is weeping’
    Láaróyè (ń)yọ̀
    ‘Láaróyè is rejoicing’

An excerpt of praise poetry (oriki) played on the Yorùbá bàtá, corresponding to the text in example (3).

An excerpt of praise poetry (oriki) played on the Yorùbá dùndún, corresponding to the text in example (3).

Neither the dùndún nor the bàtá typically encodes any consonants, with the exception of the phoneme /r/, which can at times be played with a double strike on both instruments (González & Oludare 2021). Indeed, most phonemic systems I am aware of encode little (e.g., Yorùbá drums) or no (e.g., Sambla balafon) segmental information. An exception is the Hmong ncas, a brass jaw harp traditionally used for courtship (Catlin 1982). In an ongoing study with my collaborators Neng Thao and Ford Springer, we have found that the ncas encodes information on vowel quality and consonants in addition to tone, though the number of contrastive dimensions for both is greatly reduced. This is possible thanks to the unique nature of jaw harps, which use the oral cavity as a resonator to amplify and attenuate the rich harmonics produced by plucking the instrument. Because it uses the oral cavity, its filter is the same as that used for speech. However, as mentioned in the introduction to Section 2, there is a distinction between the use of jaw harps for tonal and non-tonal languages. For non-tonal languages, the jaw harp is literally an external larynx, a vocoder, and speech is articulated normally in the vocal tract. For tonal languages like Hmong, however, the tone contrast remains primary, and tones are produced not by laryngeal f0 but by changing the configuration of the oral cavity to selectively amplify harmonics of the f0 produced by the vibrating instrument.7 While this might seem to preclude the articulation of vowels, we have found some minor front-back distinctions across some but not all tone categories, suggesting that the articulation of at least some harmonics can accommodate front-back movement of the tongue. Consonants are not articulated with normal speech gestures, but rather are reduced to a binary contrast between those that receive a pluck on the instrument and those that do not. Interestingly, this distinction does not neatly track the sonorant vs. obstruent distinction noted in Rialland’s (2005) work on Hmong whistling: voiceless sibilants receive a pluck, but all other voiceless fricatives and all voiced fricatives receive no pluck. An example of Hmong ncas surrogacy can be seen in Video 2.

Xiong Thor plays a love song on the Hmong ncas (jaw harp)

All of the examples thus far have been drawn from tonal languages, with good reason, seeing as phonemic musical surrogate languages are far more common among tonal languages than non-tonal ones (James 2021). An exception would be the use of jaw harps among non-tonal languages such as Ifugao (Blench & Campos 2010), Altai (Levin & Süzükei 2006), or Khmu (Proschan 1994; see also Nikolsky 2020). (I return to these cases in Section 2.3.) We saw that the segmental contrast most likely to be encoded in musical surrogate speech for tonal languages was vowel height, and this appears to hold true for non-tonal systems as well. For example, Snyders (1969/1976) describes a slit-log drumming system for Bauro, a language of the Solomon Islands. Like the better-described tonal slit-log drumming systems of Central Africa (Carrington 1949) or the Amazon (Seifart et al. 2018), the Bauro slit-log drum produces two pitches, one on either lip of the slit log. Rather than mapping these high and low pitches to high and low tone, however, the Bauro system instead maps the low pitch to the low vowel /a/ and the high pitch to all non-low vowels (/i, e, o, u, ê/), as shown in the following example, reproduced from Snyders (1969/1976: 1297).8 L and H indicate low- and high-pitched drum strokes, placed above their corresponding vowel to make room for a gloss:

(4)
L L L H __L        L    H H    _L H    L L L      L
Ha-ha-ga-ngi-si-a  na   go-go  i-a-ni  ta-nga-go  na
make.resonate      art  drum   this    arrive?    art
‘I am beating the drum [for you]…’9

The underscore indicates positions where a H drum stroke might be expected for the vowel /i/, but where none is found. It appears that there is variation in how vowel-vowel sequences such as ia or ai are treated in Bauro drummed speech.
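The Bauro vowel-to-pitch mapping can be stated as a short function. The Python sketch below applies it naively to hyphen-syllabified words (an assumption about the input, following the segmentation in (4)) and reports where a transcription departs from the prediction, with `None` standing for a position where no stroke is transcribed. It does not model the variable treatment of vowel sequences; it only locates where the attested drumming departs from the idealized rule.

```python
import unicodedata
from typing import List, Optional, Sequence, Tuple

# Bauro vowels as listed by Snyders; only /a/ is low.
VOWELS = {unicodedata.normalize("NFC", v) for v in ("a", "e", "i", "o", "u", "ê")}


def syllable_vowel(syllable: str) -> str:
    vowels = [ch for ch in unicodedata.normalize("NFC", syllable.lower()) if ch in VOWELS]
    if len(vowels) != 1:
        raise ValueError(f"expected exactly one vowel in {syllable!r}")
    return vowels[0]


def predicted_strokes(word: str) -> List[str]:
    """Low pitch (L) for /a/, high pitch (H) for every non-low vowel."""
    return ["L" if syllable_vowel(s) == "a" else "H" for s in word.split("-")]


def departures(word: str,
               attested: Sequence[Optional[str]]) -> List[Tuple[str, str, Optional[str]]]:
    """List (syllable, predicted, attested) wherever the transcription differs."""
    syllables = word.split("-")
    if len(attested) != len(syllables):
        raise ValueError("need one attested entry per syllable")
    return [(s, p, a)
            for s, p, a in zip(syllables, predicted_strokes(word), attested)
            if p != a]


# First word of (4): the /i/ of "si" has no transcribed stroke.
print(departures("Ha-ha-ga-ngi-si-a", ["L", "L", "L", "H", None, "L"]))
# [('si', 'H', None)]
```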

Whether vowel height is a privileged contrast in other non-tonal systems remains to be seen with future documentation.

2.2 Lexical ideogram systems

If we define musical surrogate languages as systems of communication that encode linguistic content in the sounds of a musical instrument, then it is not strictly necessary that these messages sound like speech. Lexical ideogram systems encode words, concepts, and sometimes entire messages through arbitrary musical sequences, but their linguistic content is still understood by listeners. These systems are sometimes referred to as “signal drums” (Burridge 1959; Nketia 1958), and indeed, lexical ideogram systems are to the best of our knowledge most common on drums, whether membranophones or struck idiophones like slit-log drums.

The Bauro drum discussed in Section 2.1 is an interesting case of a phonemic slit-log drumming system in Melanesia. Most documented systems, like slit-log garamut drums in Papua New Guinea, are lexical ideogram systems. Detailed description of these systems and documentation of their signals remain scant, especially as it relates to linguistic structure, likely because these systems have been historically treated as only tenuously linguistic in nature, but see Niles (2010) for an excellent overview of existing work. This is a shame, as we may be missing opportunities to analyze, for instance, how concepts are strung together and the extent to which this mirrors or diverges from the syntactic structure of the spoken language.

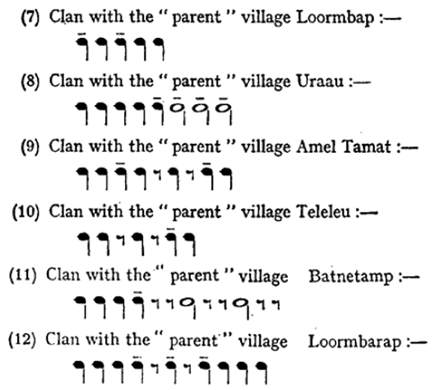

I will offer here brief illustrations of two slit-log drumming systems from Melanesia that have been described in some detail, though audio-video documentation is lacking, as they are both older studies. First, Deacon (1934/1976) describes slit-log drumming in Malekula, Vanuatu. Deacon notes that beating these slit-log drums (which he terms “gongs”) creates “a very efficient ‘gong language’ by means of which they are able to communicate with their fellows in distant villages, for every important object or happening of every day life has its motif, and even time can, to a limited extent, be expressed in the same way” (Deacon 1976: 1207). The examples in Figure 5 show a selection of “motifs” corresponding to different clan names. I have explicitly chosen this excerpt to include both Loormbap and Loormbarap, whose similar-sounding names show us that there is no discernible relationship between the rhythm played and the spoken names of villages.

More complex messages can be sent by combining different signals. For instance, Deacon describes a rhythm known by the name iuswus ngileo, whose meaning is roughly “where is…” or “is it at your place?” Interestingly, even the spoken name of this rhythm is arbitrary (or at the very least, so archaic as to be uninterpretable by speakers at the time). This rhythm is then followed by a rhythm that refers to what has been lost, such as naai tamap, which refers to a certain grade of pig.

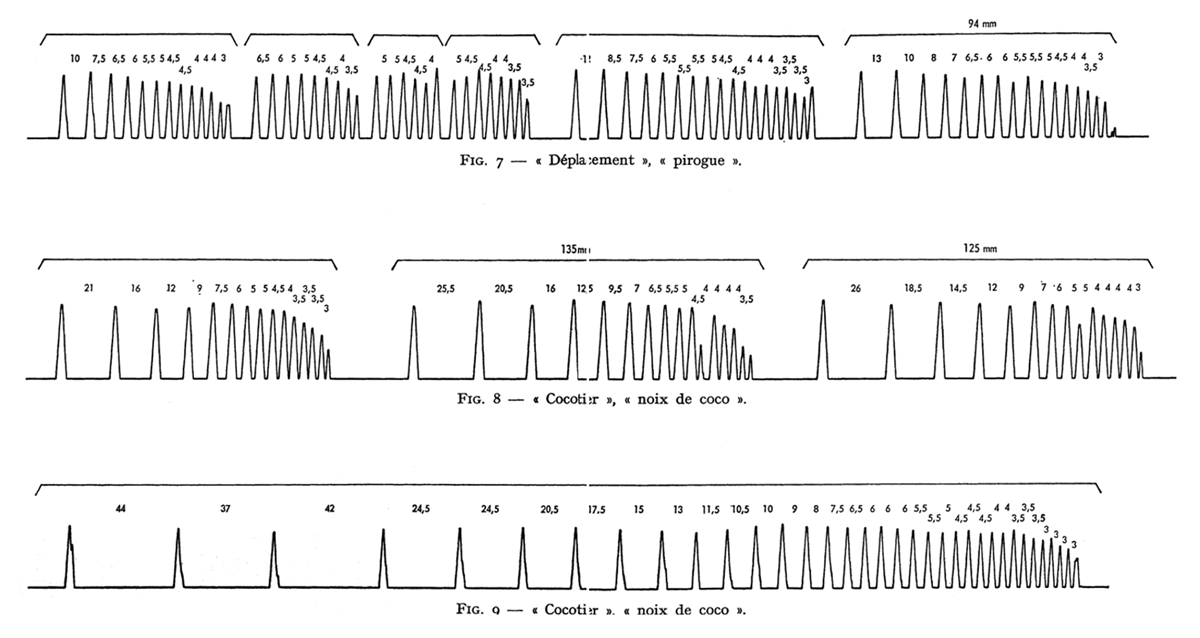

According to Deacon’s (1934/1976) description, only one side of these slit-log drums is beaten, so only one pitch is produced, unlike in Bauro drumming, where each side of the slit produces a different pitch. The same situation is found for the Kwoma slit-log drum known locally as mee (Whiting & Reed 1938; Zemp & Kaufmann 1976), or in Papua New Guinea’s lingua franca, Tok Pisin, garamut. As in the Malekula system, arbitrary drum sequences differing only in their rhythm/spacing of strikes stand for concepts such as clan names, commands to come or go, nouns, or whole announcements like “a man is sick” or “a man has died”. Interestingly, Zemp & Kaufmann note that it is not the absolute number of strikes that characterizes a sign (this number can vary) but rather the temporal relation between the strokes and the overall duration of the message. Thus, the signals for “journey/canoe” (top line in Figure 6) and “coconut” (bottom two lines in Figure 6) both consist of a series of strokes that increase in velocity, but the strokes begin closer together for “journey/canoe” and the overall length of the message is shorter than for “coconut”, which begins with more widely spaced strokes and lasts considerably longer (though still of variable duration, as can be seen by comparing the middle and bottom lines).
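Zemp & Kaufmann's observation suggests describing a signal by its timing profile rather than by its stroke count. The Python sketch below does this and matches an incoming signal to the nearest reference profile. Everything numerical in it is hypothetical: onset times are invented millisecond values, and the two reference profiles are placeholders shaped only by the qualitative description above (tighter start and shorter duration for "journey/canoe", wider start and longer duration for "coconut").

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class TimingProfile:
    first_ioi: int       # gap between the first two strokes (ms)
    total_duration: int  # first to last stroke (ms)
    accelerating: bool   # every gap shorter than the one before


def timing_profile(onsets: Sequence[int]) -> TimingProfile:
    if len(onsets) < 3:
        raise ValueError("need at least three strokes to judge acceleration")
    gaps = [b - a for a, b in zip(onsets, onsets[1:])]
    return TimingProfile(
        first_ioi=gaps[0],
        total_duration=onsets[-1] - onsets[0],
        accelerating=all(later < earlier for earlier, later in zip(gaps, gaps[1:])),
    )


# Hypothetical reference profiles; not measurements from Zemp & Kaufmann.
REFERENCES: Dict[str, TimingProfile] = {
    "journey/canoe": timing_profile([0, 400, 700, 900, 1000]),
    "coconut": timing_profile([0, 900, 1600, 2100, 2400, 2600, 2700]),
}


def nearest_signal(onsets: Sequence[int],
                   references: Dict[str, TimingProfile] = REFERENCES) -> str:
    """Pick the reference with the closest first gap and total duration; stroke count is ignored."""
    p = timing_profile(onsets)
    candidates = {name: ref for name, ref in references.items()
                  if ref.accelerating == p.accelerating}
    if not candidates:
        raise ValueError("no reference with a matching acceleration pattern")
    return min(candidates, key=lambda name: math.hypot(
        p.first_ioi - candidates[name].first_ioi,
        p.total_duration - candidates[name].total_duration))


# Six strokes rather than seven, but the timing still points to 'coconut'.
print(nearest_signal([0, 850, 1500, 2000, 2350, 2600]))  # coconut
```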

As noted in Section 2, Nketia (1971) excludes such “signal drumming” from musical surrogate languages, given the lack of a concrete relationship between the sounds of speech and the musical signal. Seifart et al.’s (2018) designation of Bora slit-log drumming as “emulated speech” would likewise exclude these systems, as the speech signal is not clearly emulated. Niles (2010), on the other hand, clearly includes lexical ideogram systems in his definition of musical surrogate languages. I am very much in agreement with this inclusion and would argue (as does James 2021) that we would be remiss to exclude these systems from our documentation of musical surrogate languages, since in fact many otherwise phonemic systems include cases of lexical ideograms, and even disambiguation strategies that are ostensibly based on speech sounds can result in an arbitrary musical sequence that differs from regular spoken language.

A simple example of a lexical ideogram in an otherwise phonemic system can be found on the Sambla balafon. Though most messages transparently encode the speech sounds of Seenku, a common message is an alternating minor third (the exact number of strokes can vary, as in Kwoma) that means something like “thank you” or “correct”, when someone has understood and appropriately responded to a message (McPherson 2018). This lexical ideogram stands out from regular surrogate speech in making use of the note of the scale corresponding to roughly a minor third in Western musical tradition, which is otherwise avoided in regular Sambla surrogate speech. Similar kinds of lexical ideograms are often found as opening and closing elements in a drummed message, for instance in Lokele (Carrington 1949/1976). In short, to gain a holistic understanding of a phonemic system like the Sambla balafon, we cannot exclude lexical ideogram elements, and to better understand how lexical ideogram elements work in a musical communication system, we likewise need to document and study them in cases where they form the basis of the system. Description and documentation of these lexical ideogram systems are sorely lacking, and I urge anyone working in an area like Papua New Guinea that has been known to have such systems to ask about them and document whatever knowledge might remain as these brilliant long distance communication systems fall into disuse.

A more ambiguous case of arbitrariness in an otherwise phonemic system is the use of a disambiguation strategy known as “enphrasing”, attested in a number of different surrogate traditions. Reducing speech to only tone and rhythm produces a large number of homophonous words, as exemplified in (2) above for the Sambla balafon. To resolve the ambiguity, in some traditions, words are placed in conventional longer phrases that are tonally distinct from one another. For instance, in Lokele (Carrington 1949), likɔndɔ ‘plantain’ and lomata ‘manioc’ are both trisyllabic L-toned words that would be played the same way on the drums. To make matters worse, they are both starch crops, and so simple linguistic context would be unlikely to differentiate them. To solve the problem, likɔndɔ is always enphrased as likɔndɔ líbotúmbela ‘plantain to be propped up’ and lomata as lomata otíkala kóndo ‘manioc remaining in fallow land’. The tonal structure of these two longer phrases is distinct, and so the correct word can be identified. Although these enphrasings are played phonemically, by encoding the tones associated with the words in the phrase, the phrases themselves become conventional and arbitrary. A listener would have to know, for instance, that the tonal sequence L-L-L-H-L-H-L-L maps to ‘plantain’, via ‘plantain to be propped up’. Thus we cannot use arbitrariness alone as a yardstick for whether a system should be seen as phonemic (a “true” speech surrogate) or as lexical ideogrammatic (“signal drumming”). This kind of enphrasing is also seen in Bora drumming in the Amazon (Seifart et al. 2018), along with conventionalized morphological markings to distinguish nouns from verbs; more details are provided in Section 3.3.
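The logic of enphrasing can be checked mechanically: reduce each expression to its tone sequence and see which meanings share a sequence. This Python sketch assumes the orthographic convention of the Lokele examples above (an acute accent marks H, unmarked syllables are L), takes hand-supplied syllabification, and ignores rhythm, so it models only the tonal side of the drummed signal.

```python
import unicodedata
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

ACUTE = "\u0301"  # combining acute accent


def syllable_tone(syllable: str) -> str:
    # Decompose so that precomposed letters like 'í' expose the combining accent.
    return "H" if ACUTE in unicodedata.normalize("NFD", syllable) else "L"


def tone_string(syllables: List[str]) -> str:
    return "".join(syllable_tone(s) for s in syllables)


def drum_lexicon(entries: Iterable[Tuple[List[str], str]]) -> Dict[str, Set[str]]:
    """Group meanings by the tone sequence a drummer would play."""
    lexicon: Dict[str, Set[str]] = defaultdict(set)
    for syllables, meaning in entries:
        lexicon[tone_string(syllables)].add(meaning)
    return dict(lexicon)


BARE = [(["li", "kɔ", "ndɔ"], "plantain"), (["lo", "ma", "ta"], "manioc")]
ENPHRASED = [
    (["li", "kɔ", "ndɔ", "lí", "bo", "tú", "mbe", "la"], "plantain"),
    (["lo", "ma", "ta", "o", "tí", "ka", "la", "kó", "ndo"], "manioc"),
]

print(drum_lexicon(BARE))       # one tone sequence, two meanings: ambiguous
print(drum_lexicon(ENPHRASED))  # two distinct tone sequences
```

The bare words collapse onto a single L-L-L sequence, while the enphrased forms yield the L-L-L-H-L-H-L-L sequence cited above for ‘plantain’ and a different sequence for ‘manioc’.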

2.3 Systems at the margins

As we have seen, scholars differ in how they define speech surrogates or musical surrogate languages, sometimes excluding lexical ideogram systems, sometimes excluding vocal music, or, in the case of my definition, excluding whistled speech. But there is a core set of traditions that everyone agrees are musical surrogate systems: those that use a musical instrument to reproduce at least some phonemic aspects of more-or-less regular speech (for more on genre, see Section 3.5). This core includes drumming traditions like Bora (Seifart et al. 2018) and the Yorùbá dùndún (Akinbo 2019; Durojaye et al. 2021; González & Oludare 2021), as well as the Sambla balafon (McPherson 2019). But as we step away from this center, there are many musical surrogate languages whose messages are more poetic in nature, adding yet another musical or aesthetic layer to the encoding of speech. Examples include the many Hmong speech surrogates (Catlin 1982; Poss 2012), Gavião flutes, mouth bows, and clarinets (Meyer & Moore 2021), and the Igbo ọ̀jà (Eze & McPherson 2025).10 At the periphery, we find two sets of practices that push the boundaries of what can be considered speech surrogacy altogether. The first is instrumental renditions of vocal music; the second is traditions where speech is fully articulated with the vocal tract, with the exception of the larynx, which is replaced by an instrument. My working definition of “systems in which linguistic content is encoded in the sounds of an instrument in order to communicate a message” would include both of these kinds of systems at the margins. However, as I will show, they operate in somewhat different ways and may tell us less than the core surrogates do about how speech is mapped to music and how people conceptualize their linguistic categories.

Many speech surrogacy traditions like the Sambla balafon have a direct “speech mode” (what was detailed above) as well as a “singing mode”, where the instrument is conceived of as singing the lyrics of songs. In the case of the Sambla balafon, these are songs that can also be sung vocally, and the instrument reproduces the sung melodies; in other words, the resulting notes are not created from a strict linguistic mapping, as is found in the speech mode (McPherson 2018), but rather after being passed through a filter of vocal composition. This first step of relating language to music is more a question of (tonal) text-setting rather than a process of speech surrogacy per se (e.g., Ladd & Kirby 2020; McPherson & Ryan 2018; Schellenberg 2012). Instrumental renderings of vocal music can similarly be found on the pion, a side-blown flute. The practice is recognized by the Sambla community as “singing” the lyrics of songs, and in that sense, it is a form of instrumental communication of linguistic content where the melodies are linguistically informed through relatively strict tone-melody mapping (McPherson & James 2021). However, if these traditions are considered speech surrogates, which seems to be their conceptualization within the community, then should instrumental versions of Western songs be considered speech surrogates as well? Or perhaps closer to the pion, what about Carnatic music of South India, where an instrument like a violin often mimics vocal music (Viraraghavan & Sankaran 2023), with repercussions for bowing patterns, among other stylistic choices (Charumathi Raghuraman, personal communication, 2025-09-25)? Neither of these cases is ever discussed as speech surrogacy, yet there are clear parallels. 
As Niles (2010: 5) also attests, it is an extremely difficult task to draw clean lines through what is in reality a cloud of interrelated musico-linguistic systems, and depending upon how we define musical surrogate languages, these marginal systems may in fact be found in most musical cultures.

The other set of systems at the margins involves the full production of speech, through either whispering or mouthing words, but with an external rather than internal sound source. One case that has more in common with other musical surrogate languages is the inanga chuchotée (Fales 2017). The inanga is a zither used by Kirundi speakers to accompany songs, but the singer whispers rather than sings the words (hence the name, from French chuchoter ‘to whisper’). The vocal melodies, which, as on the Sambla pion, are tightly related to the tones of Kirundi, are transferred to the strings of the zither. Fales (2017) describes how the right combination of performance elements (e.g., a quiet setting, the singer’s mouth close to the strings) produces an auditory illusion in which the f0 of the strings is decoupled from the instrument and perceived as part of the human voice. At face value, this tradition is simply vocal music accompanied by an instrument. However, the perceived pitch/tones of the words are produced not by the larynx but by an external instrument, and this brings it under the umbrella of musical surrogate languages. Yet because the words are fully articulated (in a whisper) alongside the musical notes, it does not result in the reduction of phonemic contrasts typically found in speech surrogacy.

Further towards the margins are non-tonal jaw harp traditions, where the jaw harp acts simply as a vocoder or sound source for the otherwise normal articulation of words, though often poetic ones (Levin & Süzükei 2006; Proschan 1994). Apart from basic voicing, these cases involve no transfer of a linguistic feature to a non-linguistic modality, such as playing different strings to follow the f0 of a vocal melody. Thus, I would argue that they are cognitively quite different from other musical surrogate languages, even if they overlap in terms of acoustic outputs or communicative niches (e.g. jaw harps are often used for courtship in both tonal and non-tonal contexts). These traditions fall under the definition of musical surrogate languages taken in this paper, but I think it is noteworthy that it is almost exclusively systems like these that are found for non-tonal languages.

I do not mean to diminish any of these traditions that are further from the “core” of musical surrogate languages. All these forms of oral culture are still very much worth documenting (see Section 3.4 for further discussion of cultural value). Still, one should be aware of the different kinds of traditions that we find and of the implications these differences have for the sorts of analyses and conclusions we can draw from them. Just as linguists engaged in language documentation ought to have some knowledge of linguistic typology to understand and contextualize their work, so too is it helpful to have a basic understanding of the typology of musical surrogate languages and other related traditions.

3. Why document musical surrogate languages?

In this section, I provide a few reasons why linguists should be interested in documenting musical surrogate languages. There is indeed an imperative to document these systems, since many, if not most, are rapidly disappearing from communities around the world. Musical surrogate languages that were used for long-distance communication are falling into disuse with the advent of cell phones and other forms of communication technology. Many more that were tied to traditional religious practices are being lost due to conversion. In other cases, though, they can evolve into new practices, like the Ewondo slit log drums used to call the faithful to church (Neeley 1996). Still others are threatened by changes to traditional lifestyles, globalized musical tastes, or even environmental changes that threaten the natural materials used in instrument construction. But why should linguists (as opposed to anthropologists or musicologists) feel compelled to document them? Perhaps most obviously, musical surrogate languages are systems of communication based upon spoken languages. Understanding the relationship between the spoken and musical forms therefore takes a linguist (or a speaker of the language) with the necessary knowledge of the linguistic structures.

Furthermore, since there are linguists working all around the world in different language communities already, we have the access and community ties to ask about these traditions. But we also have a lot to learn about spoken language, language communities, and local emic understandings of language through work on musical surrogate languages, as I will detail below.

3.1 Musical surrogate languages as ethnoscience

Musical surrogate languages are concrete manifestations of Indigenous theories of language. They show us which contrasts are the most salient to speakers, and how they are perceived and categorized. We can even learn how they are conceptualized as we engage speakers in discussions about these traditions. For instance, we saw in Section 2.1 that Yorùbá musicians have an emic understanding of vowel contrasts that is grounded in intrinsic amplitude and discussed in terms of “soft” and “intense”. This terminology relates to the speech sounds themselves. But in other cases, the instrument itself may shape the understanding of the speech sounds, as in the case of the Sambla balafon, where lower pitches are referred to as “high” because the gourds used as resonators are larger and thus keep the wooden bars of the balafon further from the ground, whereas higher pitches (called “low”) use smaller gourds which are closer to the ground, as can be seen in Video 1. For more discussion of cross-linguistic differences in pitch metaphors, see Sapir (1975) and Shayan et al. (2011).

Linguistics has long been interested in other areas of ethnoscience, such as ethnobiology (e.g., Shepard 1997; Si 2011) or color terminology (Kay & McDaniel 1978). It is thus surprising that linguists have not taken much interest in musical surrogate languages, seeing as these represent local scientific theories of language itself, which should be crucial in guiding and shaping our understanding of the languages which we study and document.

Indigenous theories of language are an important source of evidence for metalinguistic awareness at various levels of linguistic structure. For instance, as we will see in Section 3.2, the Sambla balafon surrogate language distinguishes between lexical and postlexical tone, exceptionlessly encoding the former while eschewing the latter. These strata have been proposed (or perhaps presupposed) in phonological theory, but it is important to find evidence for their psychological reality. The fact that balafonists do not encode contour tone simplification, a postlexical process, constitutes one such piece of evidence.

Surrogate languages also provide good evidence for the psychological reality of natural classes. Consider the case of vowel height: as noted, Yorùbá drummers (on both the dùndún and the bàtá) distinguish between high and non-high vowels (“soft” vs. “intense”), which aligns with the use of the phonological feature [high] in Western linguistic terms. Bauro drummers, on the other hand, distinguish between low and non-low vowels, which aligns with the phonological feature [low]. It would be interesting to investigate whether the choice between encoding [high] or [low] in the surrogate system (given that both languages also have mid vowels) is correlated with any other phonological processes in the language that might show that one or the other vocalic feature is more active or salient to listeners.
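The difference between the two drumming encodings can be stated as two binary partitions of the same vowel space. The following sketch uses the seven oral vowels of Yorùbá. For comparison only, it applies both [high] and [low] to that same inventory, since Bauro’s own vowel inventory is not given here. The labels “soft” and “intense” follow the Yorùbá terms above; the function and variable names are hypothetical.

```python
# Heights for the seven Yorùbá oral vowels. Open-mid ɛ and ɔ are grouped
# with the other mid vowels here as a simplification.
HEIGHT = {"i": "high", "u": "high",
          "e": "mid", "o": "mid", "ɛ": "mid", "ɔ": "mid",
          "a": "low"}


def partition(vowels, feature):
    """Split vowels into the [+feature] and [-feature] sets a two-way surrogate would use."""
    plus = {v for v in vowels if HEIGHT[v] == feature}
    minus = set(vowels) - plus
    return plus, minus


# A [high]-based split ("soft" vs. "intense" in Yorùbá drumming)
soft, intense = partition(HEIGHT, "high")
# A [low]-based split, as reported for Bauro drumming
low, non_low = partition(HEIGHT, "low")

MID = {v for v, h in HEIGHT.items() if h == "mid"}
print(MID <= intense)  # True: under [high], mid vowels pattern with /a/
print(MID <= non_low)  # True: under [low], mid vowels pattern with /i u/
```

The sketch makes explicit that the two systems differ only in which side the mid vowels fall on. That is why evidence from spoken phonology about the activity of [high] versus [low] would be informative.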

Though consonants typically play a far smaller role in surrogate speech than tones or vowels, the cases where they are encoded can also reveal information about natural classes, or the lack thereof. The Hmong ncas (jaw harp) makes a binary distinction between notes where the jaw harp is plucked and notes without a pluck. With some minor variation, plucked notes are all plosives and voiceless sibilants. In Western phonological feature theory, plosives form a natural class, but there is no natural class that includes plosives and voiceless sibilants but excludes other voiceless fricatives and voiced fricatives. While subconscious, the Hmong emic categorization of speech sounds into continuous and interrupted ones deviates from Western feature theory, but it is very likely grounded in a perceived acoustic trait (a distinct break in the voiced sound stream, perhaps?). Whether this can tell us anything about the behavior of spoken Hmong phonology, I am uncertain, but the deviation from natural classes is at the very least an interesting point raised by musical surrogate speech. Not all theories of language classify speech sounds in the same way, and treating musical surrogate languages as the ethnoscience of language allows us to consider linguistic structure in a new light.
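One way to make “no natural class” precise is to collect the feature values shared by every plucked segment and then see which segments those shared values pick out. The Python sketch below does this for a small illustrative consonant set with simplified binary features. Both the segment list and the feature values are assumptions chosen for demonstration, not a full analysis of Hmong, and the plucked set idealizes away the “minor variation” mentioned above.

```python
# Simplified binary features for a small illustrative consonant set
# (hypothetical; not the full Hmong inventory).
FEATURES = {
    "p": dict(cont=False, voice=False, sib=False, son=False),
    "t": dict(cont=False, voice=False, sib=False, son=False),
    "k": dict(cont=False, voice=False, sib=False, son=False),
    "q": dict(cont=False, voice=False, sib=False, son=False),
    "s": dict(cont=True, voice=False, sib=True, son=False),
    "ʂ": dict(cont=True, voice=False, sib=True, son=False),
    "f": dict(cont=True, voice=False, sib=False, son=False),
    "v": dict(cont=True, voice=True, sib=False, son=False),
    "ʐ": dict(cont=True, voice=True, sib=True, son=False),
    "m": dict(cont=False, voice=True, sib=False, son=True),
    "l": dict(cont=True, voice=True, sib=False, son=True),
}

# Idealized plucked set on the ncas: plosives plus voiceless sibilants.
PLUCKED = {"p", "t", "k", "q", "s", "ʂ"}


def shared_features(segments):
    """Feature values common to every segment in the set."""
    first, *rest = sorted(segments)
    shared = dict(FEATURES[first])
    for seg in rest:
        shared = {f: v for f, v in shared.items() if FEATURES[seg][f] == v}
    return shared


def smallest_natural_class(segments):
    """All segments matching every feature value the input set has in common."""
    shared = shared_features(segments)
    return {seg for seg, feats in FEATURES.items()
            if all(feats[f] == v for f, v in shared.items())}


extra = smallest_natural_class(PLUCKED) - PLUCKED
print(shared_features(PLUCKED))  # {'voice': False, 'son': False}
print(extra)                     # {'f'}: "voiceless obstruents" overshoots the plucked set
```

Because the smallest feature-defined class containing all plucked segments also sweeps in /f/, the plucked set can only be described as a disjunction (plosives or voiceless sibilants). In this toy feature system, it is not a single natural class.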

3.2 Tonal analysis

As we saw in Section 2.1, the majority of musical surrogate languages are based on tonal languages, and tone is generally the primary basis of the surrogate system. Musical surrogates can be of enormous benefit in analyzing and understanding tonal systems, aided by musicians’ own conceptualizations of their categories and salient acoustic traits (typically f0, but perhaps also amplitude or duration, e.g., Rouget 1964). Linguists may be daunted by tone or feel that they cannot reliably hear tonal contrasts (McPherson 2019a). But these contrasts can be amplified when transposed to a musical instrument, especially one with categorical contrasts (unlike the continuous f0 of, say, a tension drum).

Even as a tonologist, I originally struggled with Seenku’s complex tone system. Based on other languages in the area, I expected a three-tone system, and indeed, most lexical contrasts do employ only three levels (as well as contour tones). However, this analysis left some alternations (and some lexical contrasts) poorly explained, as I struggled to understand why a putative Mid tone would sometimes surface as lower or higher than an adjacent tone. As I describe in detail in McPherson (2018), the balafon surrogate language provided evidence that Seenku employs four tonal contrasts rather than three, and that one of the alternations I failed to fully grasp is due to a contour tone simplification process, whereby a Low-Superhigh rise simplifies to Low in some contexts (which is higher than the lowest tone, Extra-low) and to downstepped Superhigh in others (lower than an adjacent Superhigh but higher than High). It was possible to hear this underlying form because Sambla balafonists distinguish between lexical/grammatical tone and postlexical/surface tone in the surrogate language: the former is always faithfully encoded, and the latter is almost never encoded.

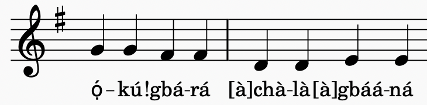

An instrument with continuous f0 like the Yorùbá dùndún can encode not just the phonemic contrasts but also phonetic details of tone, as I described in Section 2.1 above. This suggests that the encoding of tone—and what the linguist can learn about it—arises from an interplay between the language’s tonal system and the instrument’s capabilities. Fixed pitches and a complex tone system with four levels are likely to lead to phonemic encoding, as on the Sambla balafon; continuous pitch is more likely to lead to surface emulation of f0, as on the Yorùbá dùndún; a larger scale of fixed pitches and fewer tone contrasts allows the possibility of encoding downstep and downdrift, which we find on the Igbo ọ̀jà (Carter-Ényì et al. 2021; Eze & McPherson 2025). This last case is demonstrated in (5), taken from Eze & McPherson (2025):

(5)

| Ọ́kụ́ | !gbárá | àchàlà | àgbááná |
|---|---|---|---|
| fire | burnt.REL | bamboo | burnt.COMP |

‘The fire that burnt the bamboo has burnt it completely’

Igbo is fundamentally a two-tone language, though it also shows extensive (and contrastive) downstep of H tone as well as downdrift, in which the second H in a HLH sequence is lower than the first (Connell 2011). In this example, we see a tonal sequence H-!H-L-H, and this stepwise lowering is mimicked in the notes of the ọ̀jà, shown in Figure 7.

Gerald Eze plays a warning on the Igbo ọ̀jà, corresponding to the text in example (5) and the musical transcription in Figure 7.
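The stepwise lowering in (5) can be approximated with a deliberately simple “terracing” model, sketched below in Python. The model assumes, purely for illustration, that a downstep marker lowers the ceiling for High tones by one step. A High immediately after a Low is likewise lowered by one step (downdrift), and Low sits two steps below the current ceiling. The outputs are abstract pitch steps, not a transcription of the ọ̀jà notes in Figure 7. The syllabification of àgbááná as à.gbá.á.ná is also an assumption.

```python
def terrace(syllable_tones):
    """Map H, !H, and L tones to relative pitch steps (0 = the initial High).

    Toy assumptions: '!H' lowers the High ceiling by one step (downstep);
    an H right after an L also lowers it by one step (downdrift);
    L is realized two steps below the current ceiling.
    """
    ceiling = 0
    previous = None
    steps = []
    for tone in syllable_tones:
        if tone == "!H":
            ceiling -= 1
            steps.append(ceiling)
        elif tone == "H":
            if previous == "L":
                ceiling -= 1
            steps.append(ceiling)
        elif tone == "L":
            steps.append(ceiling - 2)
        else:
            raise ValueError(f"unknown tone: {tone!r}")
        previous = tone
    return steps


# Ọ́kụ́ !gbárá àchàlà àgbááná, one tone per syllable
tones = ["H", "H", "!H", "H", "L", "L", "L", "L", "H", "H", "H"]
print(terrace(tones))  # [0, 0, -1, -1, -3, -3, -3, -3, -2, -2, -2]
```

Reading off the first syllable of each word gives 0, −1, −3, −2. The downstepped High sits below the initial High, and the final High sits below both Highs but above the Lows. This is the kind of terraced contour that an instrument with enough fixed pitches can follow.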

If, on the other hand, the language has more tone contrasts than available pitches, then the ways in which contrasts are maintained or neutralized can provide insight into their underlying representations and/or speakers’ awareness of them. This is the case on the Akan atumpan, a set of two barrel drums that encode the H and L tones of Akan (Bagemihl 1988; McPherson & Obiri-Yeboah 2024; Nketia 1971). Like Igbo, Akan makes use of a downstepped H, but there is no third distinct pitch category on the drums to encode this surface tone. Nketia (1971) states that it is simply encoded as H. However, McPherson & Obiri-Yeboah’s (2024) analysis of a corpus of drummed phrases revealed a more varied picture. Downstepped H is sometimes played as H, sometimes “unpacked” into an underlying LH sequence, and sometimes played as L. The last option occurs especially in phrase-final position, presumably due to the cumulative effect of phrase-final lowering and downstep.

The documentation and analysis of more tonal surrogate languages will allow us to further probe the relationship between tonal structure and instrumental constraints and look for any universal tendencies in how tone systems are mapped to the sounds of musical instruments.

3.3 Morphosyntactic structures

While it may appear that musical surrogate languages are solely the domain of phoneticians and phonologists, this is absolutely not the case. We can likewise find phenomena of interest to (morpho)syntacticians and those interested in morphosyntactic typology.

First, bridging the gap between morphosyntax and phonology, musical surrogate languages based on tonal languages can be expected to encode grammatical tone. West Africa is a natural testing ground for this hypothesis, as it has a high density of both grammatical tone and musical surrogate traditions. Some examples of studies confirming the presence of grammatical tone in speech surrogacy include McPherson (2018) for the Sambla balafon, Akinbo (2021) for the Yorùbá gángan, Struthers-Young (2022) for the Northern Toussian balafon, and McPherson & Obiri-Yeboah (2024) for Akan speech surrogacy. Unfortunately, many morphosyntactic descriptions and analyses continue to omit tone marking (McPherson 2019a), making it more of a challenge to identify and confirm the presence of grammatical tone in musical surrogate language data. On the other hand, musical surrogate languages can offer an important tool for hearing and understanding grammatical tone contrasts, since they often render tone contrasts as discrete and more easily distinguished, as detailed in Section 3.2.

In most cases of a phonemic surrogate language, we expect the morphosyntax of the surrogate message to mirror that of the spoken language, and indeed, that is typically the case (e.g., Yorùbá, Igbo, Seenku). But we can also find cases where the two differ in interesting ways, especially in enphrasing. First, Bora slit log drumming distinguishes between nouns and verbs in the surrogate message by means of conventionalized morphology. Nouns are marked with the suffix -úβù, which in the spoken language means ‘deceased’, though it does not carry this meaning in surrogate speech. Verbs are marked with the suffix -ʔíhkʲà, which in the spoken language indicates repeated action, but again, it is devoid of these semantics in surrogate speech. Though tonally identical, the two suffixes are rhythmically distinct, as the timing of drum strikes on the Bora slit log drums carefully tracks vowel-to-vowel intervals (Seifart et al. 2018). This shows how existing morphological structure can be co-opted for its rhythmic properties in surrogate speech.

Enphrasing also shows cases where morphological structure that is unattested in regular speech is used in the surrogate language. We find examples of co-hyponym compounds (compounds that combine two specific terms to refer to an overarching category) used to support enphrasing across multiple Bantu slit log drumming systems (Carrington 1949, described in James 2021), such as Lokele mbóli sango ‘news’ made up of two words for ‘news’ or Mbɔle tofulú ánɔtɔli ‘little bird’ made up of two kinds of little birds. What makes these cases particularly interesting is that Bantu languages do not generally have productive co-hyponym compounding, which shows that speakers are able to draw upon linguistic structures that are attested cross-linguistically, though not in their own spoken languages.

Another such case is found in Senegalese sabar drumming. Winter (2014) describes sabar drumming as likely a lexical ideogram system—there are but tenuous connections between spoken Wolof and the words and phrases played on the sabar drums (Ros 2021). In principle, a lexical ideogram system could follow the same morphosyntactic patterns as the spoken language, simply with arbitrary signs, but this need not be the case. An interesting divergence between the morphosyntax of spoken Wolof and the sabar drum system can be found in the plural. Wolof employs noun classes, and plural number is marked on the noun class determiner, e.g., singular definite bi vs. plural definite yi. On the sabar drums, however, pluralization is marked through reduplication (or retriplication) of the last drum stroke, as shown in the examples in (6) from Winter (2014: 658), where the syllables in the righthand column reflect onomatopoeia for different drum strokes:

(6)

| | Wolof (singular) | Wolof (plural) | Sabar (singular) | Sabar (plural) |
|---|---|---|---|---|
| a. | weex bi (white DEF.SG) | weex yi (white DEF.PL) | rwe gin | rwe gin gin |
| b. | rafet bi (beautiful DEF.SG) | rafet yi (beautiful DEF.PL) | gin tac tac tac | gin tac tac tac tac |
| c. | tuuti bi (little DEF.SG) | tuuti yi (little DEF.PL) | pax ce gin | pax ce gin gin gin |

Like the Bantu co-hyponym case, sabar reduplication is interesting in that it draws upon a morphological process for plural marking (reduplication) that is typologically common but not attested in the musicians’ spoken language. One could take such observations as the basis for a bold claim: that they provide evidence for universal grammar or for other tendencies toward common linguistic structures.
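The sabar pattern in (6) can be stated as a single operation on stroke sequences: copy the final stroke one or more times. The Python sketch below checks that description against the three examples from Winter (2014). The number of extra copies is passed in explicitly, because (6c) adds two copies where (6a) and (6b) add one, and nothing in the data above predicts how many copies a given form takes. The function name is hypothetical.

```python
def pluralize(strokes, extra_copies=1):
    """Mark plural by repeating the final drum stroke `extra_copies` more times."""
    if not strokes:
        raise ValueError("a stroke sequence cannot be empty")
    if extra_copies < 1:
        raise ValueError("plural marking needs at least one extra copy")
    return strokes + [strokes[-1]] * extra_copies


# Singular and plural stroke sequences from (6), with the copy count each shows.
EXAMPLES = [
    ("rwe gin", "rwe gin gin", 1),                  # (6a) 'white'
    ("gin tac tac tac", "gin tac tac tac tac", 1),  # (6b) 'beautiful'
    ("pax ce gin", "pax ce gin gin gin", 2),        # (6c) 'little'
]

for singular, plural, copies in EXAMPLES:
    assert pluralize(singular.split(), copies) == plural.split()
print("all three plurals match")
```

Modeling the plural as an operation on the final stroke, rather than as a separate plural sign, reflects the point made above. The drum system marks number by a formal process (reduplication) instead of mirroring the Wolof determiner alternation bi/yi.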

Lexical ideogram systems remain virtually undescribed from a linguistic perspective. Documenting these systems could provide a rich source of data for probing how linguistic messages are structured when they diverge phonemically from the spoken language.

3.4 Oral literature and cultural importance

Oral literature is a common feature of documentary corpora. Folk tales, prayers, and songs are often among the first genres collected, and yet musical surrogate languages do not make this list, despite similarly being a form of oral literature. Like other genres, musical surrogate messages and songs are intimately intertwined with cultural institutions and societal structure, and so their documentation can also capture important aspects of the culture that may otherwise be missed.

Eze & McPherson (2025) describe the role of the Igbo ọ̀jà in the Mmọnwụ masquerade cult. The aerophone is used to send messages of warning to the masked spirit, such as that shown in (5) and Figure 7, as well as to shower praises upon titled men and women in the audience. These messages are poetic and rich in proverbs, and as such, they are only fully understood by intellectuals in Igbo society. They also draw upon other aspects of Igbo culture and metaphysics, such as numerology. For example, a common praise is ńwó!ké téghété ‘a man worth nine’, where the number nine is of spiritual significance, as a multiple of the already important and balanced number 3 (Umeh 1997).

Documenting the Sambla balafon as part of a larger project on Seenku (McPherson 2018, 2020) has taught me an enormous amount about Sambla culture that I would not otherwise have learned. For example, I learned that every family belongs to a particular clan or totem, each of which has its own unique song (itself rich with proverbial lyrics). Whenever balafonists visit a family’s house, they must play that family’s song. These songs can also be requested by family members at weddings or festivals where balafonists are playing. Similar songs exist for the chiefs of each Sambla village. Without documenting the surrogate language, I would likely never have learned about this aspect of Sambla societal structure.

The rich cultural content of musical surrogate languages, as well as their integration with other aspects of the culture (dance, religion, social relations, etc.), is one of the reasons why communities may take greater interest in the documentation of these systems than of everyday speech. (Although it may be counterintuitive to linguists, music, it turns out, generates more interest than verb conjugation.) Indeed, at least in Africa, many Indigenous scholars have taken great interest in musical surrogate languages (particularly “talking drums”) and promoted their value as a source of knowledge about history, literature, and the arts. For instance, renowned Ghanaian musicologist J.H. Kwabena Nketia dedicated several articles and monographs to exploring the linguistic, musical, and literary contributions of Akan and other African drummed speech traditions (Nketia 1958, 1963, 1971). In Côte d’Ivoire, Prof. Georges Niangoran-Bouah proposed the field of drummologie as an anti-colonial endeavor, recognizing the technological and scholarly achievement that these drum systems represent (Arnaut & Blommaert 2009; Niangoran-Bouah 1980). Building upon this, in Burkina Faso, the writer Titinga Pacéré developed the notion of bendrologie for the study of drum literature in the Mooré language. In each of these cases, musical surrogate languages and their literary elements are recognized as inseparable.

Thus, not only does the inclusion of musical surrogate languages in language documentation offer novel opportunities for understanding linguistic structure, it also opens up a world of oral literature, local history, and emic understandings of language science. The inclusion and promotion of this kind of Indigenous scholarship in language documentation represents a step towards anti-colonial linguistics, taking practitioners of musical surrogate languages, with their theories of cross-modal mapping, as linguists in their own right, rather than simply language consultants who provide data to Western science.11

On the flip side, the close connection between musical surrogate languages and Indigenous religious traditions can be one of the reasons why these practices are endangered or have already fallen out of use. Due to changing religious affiliations (or external pressures from religious groups), former practitioners may be hesitant to publicly play them. For example, in Igbo territories, Christian groups have invited priests and pastors to burn the giant slit log ikoro drums, which are sacred drums used for speech surrogacy (Eze & McPherson 2025). The destruction of these instruments and the musical-linguistic practices that depend on them represents the destruction of Indigenous science and ways of thinking. In other communities, musical surrogate traditions have adapted to new religious practices, as in the case of Ewondo drumming used to call the faithful to church (Neeley 1996). The researcher must remain sensitive to the cultural and spiritual implications of musical surrogate languages and the repercussions these have for recording and disseminating materials, as also mentioned in Section 4.1 and Section 4.6 below. If it is safe for all involved, linguists and language documentarians have an opportunity to act in solidarity with communities to preserve and promote the physical instruments and cultural practices that form the heart of Indigenous language science.

3.5 Genres and communicative ecology

A final reason to document musical surrogate languages is that they help us gain a fuller understanding of a society’s communicative ecology. Spoken language is just one piece of the puzzle, though arguably the dominant one. If we wish to understand how people communicate with one another (and with the physical and spiritual world around them) at different times and in different contexts, then asking whether a community has instrumental communicative practices is of utmost importance. To understand their role in the communicative ecology, we must ask not only how they work, but also when, where, and why they are used. What speech genres do they represent? What impact does their use have on comprehension or discourse strategies? The answers can likewise help us understand why musical surrogate languages may be structured the way that they are.

I present here a case study from Hmong culture, which has surrogate traditions on a number of different instruments, each with its own communicative niche. The ncas jaw harp, discussed in Section 3.1, is used primarily for courtship (Catlin 1982; Mareschal 1976). Why would a culture use speech surrogacy for courtship? A first reason is that music, if not speech surrogacy, is routinely used in human cultures for courting, and musical surrogate languages are a form of music. A further reason, cited by Catlin (1982) and echoed by some of our Hmong consultants, is that using an instrument allows a form of dissociation between the player as an individual and their message. If the courtship is rejected, the rejection can thus be viewed as a rejection of the song rather than of the self. A convenient side effect of using an instrumental surrogate is that it also disguises the lover’s voice from others who might be listening in.

So why use a jaw harp instead of a drum, flute, or xylophone?12 The sound of the jaw harp is quiet and intimate, and so it can only be properly heard in close proximity to the intended listener (rather than declaring messages of love across the hills). It is also small and portable, so it can easily be tucked into a pocket and brought on (sometimes clandestine) courtship missions. I note also an interesting connection between speech surrogacy and a society’s material culture: jaw harps are only used for courtship in communities whose houses have bamboo walls, which allow the sound to pass through easily (Neng Thao, personal communication, 2025). Our Hmong consultants describe how a young man would go to the house of the young woman he was courting and start playing on the other side of the wall from where she slept until she heard him. At this point, she could either ignore it (being so quiet, the instrument gives her plausible deniability) or begin to play in response. Lovers get to know the playing style of their partners, and so even if the ncas disguises the person’s voice to others listening in, the pair will be able to recognize each other.

As the exchange above suggests, the ncas can be used by both men and women, a relative rarity for speech surrogacy traditions in my own experience (most tend to be male-dominated). The genre of language that is encoded on the ncas is not regular everyday speech, but rather poetic verses around the themes of love and longing. This heightens the emotional impact of the messages and also helps the listener make better guesses about what is being said, since it restricts the hypothesis space to phrases that fall within those semantic and aesthetic bounds.

We can compare the use of the ncas to the use of the qeej, a free-reed mouth organ with six bamboo pipes (Catlin 1982; Falk 2004). The qeej is a considerably louder instrument that also produces polyphonic music by combining a drone tone with other musical notes. Catlin (1982) argues that this obscures direct tone-note correspondences to the extent that the qeej should be considered an encoding rather than abridging phonemic system in Stern’s (1957) terminology. According to Falk (2004), this more obscured relationship between language and music may be beneficial, since the instrument is used to encode a lengthy funeral repertoire, with messages that give instructions to the spirits of the deceased. In this sense, the messages are meant not so much for human listeners as for those in the spirit realm, and these “dangerous messages” could lead a living person’s spirit astray. This funeral repertoire is fixed and learned by rote, unlike the more creative poetic language used on the ncas. However, it should be noted that the qeej is also a popular instrument outside of funeral contexts, such as at New Year festivities. Its messages therefore need not be dangerous ones. Either way, this louder, more melodious instrument is considerably better suited to both contexts than the quiet (and more speech-like) ncas. Traditionally, only men play the qeej.

Meyer & Manfredi (2025) likewise note that “talking and singing with a same instrument can be robustly distinguished in performance thanks to rhythmic and melodic cues”, citing cases including the Bora drums (Seifart et al. 2018), Gavião instruments (Meyer & Moore 2021), and Yorùbá drumming (Durojaye et al. 2021), among others. The same distinction was noted for the Sambla balafon in Section 2.3, where speech genre had ramifications for tone encoding. We must therefore remember that musical surrogate speech, even on a single instrument in a single culture, may not be monolithic. Rather than representing a single genre, it may itself be multifaceted, with different settings or styles involving different registers, rules of encoding, or types of language.

Apart from distinctions in speech genre (poetry, song, speech, chants, proclamations, etc.), musical surrogate languages can also differ in their discourse structure. Many long-distance drumming traditions are meant for one-way communication: they send out a message but do not expect to receive a (real-time) response. The message often follows a strict template to render it more comprehensible, as detailed for the Bora slit log drum (Seifart et al. 2018) and many of the Papuan garamut signaling systems (Niles 2010). More local musical surrogate languages can be either performance-oriented, as with the Hmong qeej, where no verbal interaction from bystanders is expected, or conversational, as with the Sambla balafon. During a performance, the balafonist can stop the music and converse with a member of the audience, the musician using notes of the balafon and the audience member speaking normally in Seenku (McPherson 2018). This conversational usage also means that the speech encoded on the balafon in these instances is less poetic and song-like.13 In the Yorùbá dùndún drumming tradition, it is common for the drum to directly repeat and mimic the speech of a “hype man” or other participant, rather than being used conversationally (Akinbo 2021). The use of speech surrogates can also limit the syntactic complexity of messages (Sicoli 2016), likely to mitigate the comprehension difficulties that arise from the reduced set of contrasts.

These examples show that, in documenting musical surrogate languages, it is important not just to record musical speech (and ideally analyze its connection to the spoken language), but also to document where, when, and why it is used, how the messages are structured (in terms of both genre and discourse practices), and how the system fits into a broader communicative ecology.

4. A brief “how to” guide

For the linguistic researcher who is willing and eager to document musical surrogate languages but does not know where to start (or does not feel fully equipped to do so), this section offers some brief suggestions. Naturally, this methodology can be adapted to the needs, interests, and material capabilities of the researcher.

4.1 Working with musicians: Practical and ethical considerations

Knowledge of musical surrogate languages can be highly specialized, mastered by only a (sometimes small) subset of the population. In some cases, performing musical surrogate speech may be the musicians’ livelihood, and so collecting these materials may mean something very different from the kind of data collection routinely done in language documentation. It can take years, or indeed a lifetime, of practice to understand the depths of the tradition, and these true masters can be highly sought after by the community; it may therefore be very difficult to work with these individuals, as their time is at a premium.

All of these factors come with financial and ethical considerations. Typically in language documentation, linguists compensate consultants for their time and participation, either through modest financial contributions, returning the time and effort on community projects, or gifts to individuals or the community at large. For expert musicians, however, who are sharing their craft (and their intellectual material—more on this below), higher payments are merited (and often necessary). The challenge for the researcher is to determine what is a reasonable payment based on local norms, the musician’s skill level and seniority, and the research budget. Here, there are no easy answers.14 I have found in my own work that the best course of action is to find musician collaborators who see the value in the documentary work and so are themselves stakeholders in its success. They too must be paid appropriately for their expertise, but when they understand the scientific (rather than media) motivations for the work and the researcher’s budget, then these payments tend to be reasonable. My general approach has been to pay musicians between two and four times what I would pay a regular speaker for the equivalent time of work. This standard acknowledges the artistry, expertise, and cultural value of what the musician is sharing. The exact amounts, as with any research compensation, depend upon the local economy, the relationship with the community, and the project resources.

Sometimes very well-known or senior musicians may simply be out of budget. I have had the experience of a musician (not based in the United States!) asking for over $2000 to film a concert, which was infeasible. In cases like this, my recommendation would be to identify skilled musicians (with the help of local community members) who are somewhat younger or perhaps more scientifically inclined, even if they are not recognized as the top experts in the tradition. They still have valuable skills and insights, and when the limits of their knowledge are reached, it may be possible to negotiate a short session with a more senior expert (at a potentially higher cost) to fill any gaps. This also offers the opportunity to document how musical surrogate languages are learned and transmitted from one generation to the next.

Another consideration in recording musical surrogate languages is whether any of the information is secret, sacred, or only meant to be understood by a particular (e.g., initiated) sector of society. In such cases, it may not be appropriate to research the traditions at all, or there may need to be special provisions made for archiving or disseminating results. Close consultation with the community is needed to determine appropriate boundaries in these (and indeed all!) cases.

Finally, the researcher should remain sensitive to the potential implications of recording the musical tradition at all. For instance, in her work recording Garlu folk songs in Nepal, Blumenthal (2007) found that the availability of recordings rendered the caste of musicians who traditionally performed them expendable. There can thus be a delicate balance between documenting and preserving threatened oral literature on the one hand and preserving the cultural ecosystem that has kept it alive on the other.

For issues of intellectual property that arise when recording (and archiving) music, see Section 4.6.

4.2 Recording considerations

Linguistic training in recording techniques is tailored to the human voice. Musical instruments present a unique challenge, especially if the recording also includes a spoken interview. Many instruments, especially percussive ones, are much louder than the human voice and will cause extensive clipping if the microphone’s input level is set to capture speech. If available, two (or more) microphones should be set up with different input levels, one suited to the instrument and one to the human voice. The two recordings can either be mixed together later or both be archived, so that both recorded streams remain accessible.

Some instruments are used naturalistically in larger ensembles, which presents a challenging recording environment. If the researcher wants to capture the whole musical performance, which allows for documentation of important aspects of participation structure and discourse rather than isolated messages, then ideally multiple microphones would be used for different instruments, with musicians spaced as far from one another as feasible to reduce cross-talk (i.e., microphones picking up sound from other instruments). Figure 8 shows one such setup from fieldwork I carried out in Benin, where I also had the benefit of working with a professional sound engineer. (Would that we were always so lucky in language documentation!) Spacing the musicians out this way was an unnatural setup for musical performance, but it provided the best possible recording conditions while allowing the musicians to play simultaneously. If one particular instrument is the target of research, an alternative that avoids this arrangement is to place the microphone closest to that instrument so that its sound stands out above the rest.

Ultimately, it would be ideal to collect different kinds of recordings to answer different kinds of questions. To focus solely on the instrument as a means of surrogacy, i.e., to probe how the linguistic message is translated into musical performance, it is best to record the instrument and its messages played solo, separate from a larger ensemble. This is especially true for structured elicitation, discussed in Section 4.4. However, to capture participation structure both among musicians and between musicians and those around them (audience members, dancers, spectators, etc.), wide-angle video, little to no interference with the natural positioning of musicians, and separate microphones on the primary instruments of communication would provide the best data to showcase how the speech surrogate is naturally used.

4.3 Naturalistic phrases and naturalistic performance

In analyzing a musical surrogate language, we can ask three questions: (1) What does the instrument generally say, i.e., what kinds of messages does it naturally send in the culture? (2) How productive is the system? Could it, in principle, say anything? (3) How is the surrogate actually performed, and how does it fit into the community’s broader communicative ecology?

There are (at least) two ways to answer these questions, each with its pros and cons. The first is to conduct formal interviews with musicians, asking about their usual repertoire of words and phrases; the second is to record natural instances of musical surrogate languages in use. The former makes it easier to address the recording considerations discussed in the last subsection, rendering the phrases easier to hear and analyze from a structural perspective, but this setup makes it very difficult to answer the third question: how is speech surrogacy actually performed? As noted above, if feasible, both kinds of recordings should be made.

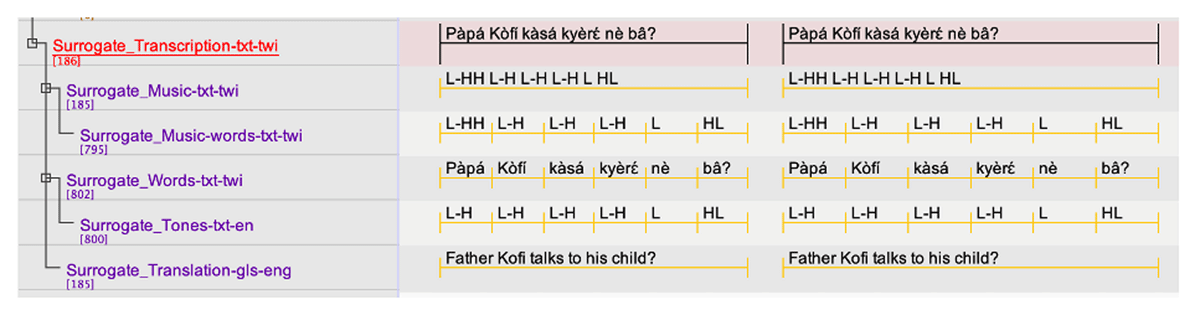

Let us first assume that we are staging a formal interview with musicians. Answering the first question involves recording naturalistic phrases that are part of the musical surrogate’s typical repertoire. This tends to be much easier for musicians than the structured elicitation used to probe productivity, discussed in Section 4.4. To record these phrases, I would set up an interview with the musician and their instrument and begin by asking about the contexts in which they play and what kinds of messages the instrument can send. This gives the player the opportunity to demonstrate phrases and provides some answers to the third question of communicative niche. For structural analysis, it is of utmost importance that each phrase played on the instrument also be spoken aloud, in order to determine how speech maps to music. Ideally, this would be done by the musician, since it is their understanding (and perhaps pronunciation) of a phrase that is being mapped to the instrument; where this is not possible, another speaker well versed in the musical tradition could speak the phrases instead. In Sambla culture, for instance, there are taboos against the musician speaking the words (McPherson 2018). Video 3 demonstrates this kind of back-and-forth for the Igbo ufie (slit log drum). One should aim to have both the musical and the spoken phrases repeated more than once to safeguard against errors and to capture variation.

Matthias Chinwendu Ugwoke, Michael Odome, and Lawrence Oke of the group Ozor Amauwenu demonstrate speech surrogacy on the Igbo ufie (slit log drum). This video was recorded in Nigeria in 2021 by Aaron Carter-Ényì and the Africana Digital Ethnography Project (ADEPt).

By interviewing the musicians about cultural contexts and modes of use, the researcher has the opportunity both to learn about the uses of the instrument and to probe different kinds of phrases and messages. For instance, messages are likely to differ between a funeral setting and a marriage setting, and discussing both contexts creates opportunities to demonstrate more phrases. For the language documentarian, conducting interviews of this sort in the target language also produces valuable narratives to enrich the documentary corpus (McPherson 2018). Such interviews are also likely to surface new (musical) vocabulary to add to a lexicon.